Scrape Job Boards for B2B Sales Intelligence: The SDR Playbook (2026)

Every day, thousands of companies publish job postings that reveal exactly what they're about to buy. A company posting for a "Head of E-commerce" is about to invest in a new platform stack. A listing for a "Pricing Manager" signals budget allocated for competitive intelligence tools. If your sales team knows how to scrape job boards for B2B sales intelligence in 2026, these signals arrive before any inbound lead form — and before your competitors make the call.

This guide is written for SDRs and sales ops teams who want a practical, repeatable workflow. Not another API comparison. Not a developer tutorial. A playbook.

Why Job Postings Are the Highest-Intent B2B Buying Signal

A company doesn't open a new headcount without budget approval. By the time a job posting goes live on LinkedIn or Indeed, a decision has already been made internally: we need this function, and we have money to staff it. That's as strong a buying signal as a pricing page visit.

According to HubSpot's 2026 Sales Trends Report, teams that use intent signals in their outreach see 2.7x higher reply rates than those relying solely on cold list-building. Job postings are one of the most reliable intent signals available — and unlike third-party intent data, they're free and publicly accessible.

Here's why job boards beat most other signal sources:

- They're updated in real time. Most companies post within days of budget approval, giving you a narrow window to be first.

- They're specific. A job title tells you what the company is solving for — not just that they're "interested in your category."

- They're pre-qualified. If a company is hiring a RevOps Manager, they already understand the function. You don't have to sell the concept.

- They're persistent. A posting that stays live for 30 days signals a company actively trying to fill a need — follow up weekly, not once.

European B2B teams in Nordic and DACH markets have been slower to adopt intent-based prospecting, which leaves early movers in Sweden, Germany, and the Netherlands particularly well-positioned right now. Fewer European companies hire into these functions, so each one that does is a more valuable target.

Which Job Titles Signal ICP Fit (And Which Roles to Monitor)

Not every open role is a buying signal for your product. The goal is to build a monitored keyword list that maps job titles to your ICP. Here's how that looks for a company selling competitive pricing intelligence or e-commerce data:

| Job Title | What It Signals |
|---|---|
| Head of E-commerce | Platform refresh, data stack investment |
| Pricing Manager / Analyst | Competitive pricing tools, data feeds |
| RevOps Manager | CRM enrichment, sales tooling |
| VP of Sales | Sales intelligence, prospecting tools |
| Market Intelligence Analyst | Data subscription or scraping service |
| Category Manager (Retail) | Price monitoring, supplier comparison data |
| Digital Commerce Director | Full-stack data layer including scraping |

This mapping is your "trigger dictionary." Every time a company in your ICP posts one of these roles, it enters your pipeline — not your general prospecting list, but a hot tier with a specific outreach message tied to the trigger.

Web scraping for lead generation works best when the signal is specific enough to personalize outreach. Job title monitoring gives you that specificity automatically.

Build your trigger dictionary before you configure any scraper. It's what makes the difference between a raw data feed and a sales intelligence system.
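A trigger dictionary can start as a plain lookup from title patterns to signals. The sketch below is one possible shape, not a prescribed format: the regex patterns and the `match_trigger` helper are illustrative assumptions, and the signal strings simply mirror the table above.

```python
import re
from typing import Optional

# Hypothetical trigger dictionary: title pattern -> what the hire signals.
# The patterns are illustrative; tune them to your own ICP.
TRIGGER_DICTIONARY = {
    r"\bhead of e-?commerce\b": "Platform refresh, data stack investment",
    r"\bpricing (manager|analyst)\b": "Competitive pricing tools, data feeds",
    r"\brevops manager\b": "CRM enrichment, sales tooling",
    r"\bvp (of )?sales\b": "Sales intelligence, prospecting tools",
    r"\bmarket intelligence analyst\b": "Data subscription or scraping service",
    r"\bcategory manager\b": "Price monitoring, supplier comparison data",
    r"\bdigital commerce director\b": "Full-stack data layer including scraping",
}


def match_trigger(job_title: str) -> Optional[str]:
    """Return the signal for the first matching trigger pattern, else None."""
    normalized = job_title.lower()
    for pattern, signal in TRIGGER_DICTIONARY.items():
        if re.search(pattern, normalized):
            return signal
    return None
```

Matching on patterns rather than exact titles lets "Senior Pricing Analyst (EMEA)" and "VP Sales, Nordics" land in the same buckets as their canonical titles.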

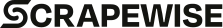

Which Job Boards to Scrape for B2B Sales Intelligence in 2026

Not all job boards are equal for B2B intelligence purposes. Here's the coverage breakdown:

LinkedIn Jobs is the highest-signal source for mid-market and enterprise targets. Decision-maker roles (VP, Director, Head of) are posted here more consistently than on any other platform. LinkedIn's anti-bot protection is aggressive, so the methodology used for scraping JavaScript-heavy e-commerce sites applies here too: dynamic rendering and session rotation are requirements, not optional extras.

Indeed and Glassdoor are better for SMB coverage and volume. If your ICP includes fast-growing regional retailers and mid-size operators, these boards surface companies that don't always post on LinkedIn.

ATS career pages (Greenhouse, Lever, Ashby, Workday) are the hidden gold mine. When a company uses an ATS, its open roles are published on a hosted careers page, for example boards.greenhouse.io/<company> for Greenhouse or jobs.lever.co/<company> for Lever. These pages update in real time and typically have lighter anti-scraping infrastructure than LinkedIn. A single Greenhouse scraper can cover thousands of mid-market companies.

Google Jobs aggregates across all of the above. It's useful for discovery and cross-referencing, but not a replacement for scraping sources directly — it doesn't expose company metadata in the same structured way.

Wellfound (formerly AngelList Talent) is the best source for Series A–C startups, particularly in European tech. Roles here often include company funding stage, team size, and investor data — all enrichment you'd otherwise build separately.
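For the ATS career pages described above, Greenhouse is a reasonable first target because it also exposes a public Job Board API. The sketch below assumes the list endpoint lives at `boards-api.greenhouse.io/v1/boards/<board_token>/jobs` and returns a `jobs` array with `title`, `absolute_url`, `location.name`, and `updated_at` fields. Verify both against Greenhouse's current documentation before relying on them. The output field names are hypothetical.

```python
import json
from typing import Dict, List
from urllib.request import urlopen

# Assumption: public Greenhouse Job Board API endpoint for listing open roles.
GREENHOUSE_JOBS_URL = "https://boards-api.greenhouse.io/v1/boards/{board_token}/jobs"


def parse_greenhouse_jobs(payload: Dict) -> List[Dict]:
    """Flatten a Greenhouse jobs payload into normalized posting records."""
    postings = []
    for job in payload.get("jobs", []):
        location = job.get("location") or {}
        postings.append({
            "title": job.get("title", ""),
            "url": job.get("absolute_url", ""),
            "location": location.get("name", ""),
            "updated_at": job.get("updated_at", ""),
            "source": "greenhouse",
        })
    return postings


def fetch_greenhouse_jobs(board_token: str, timeout: float = 10.0) -> List[Dict]:
    """Fetch and normalize open roles for one company's board token."""
    url = GREENHOUSE_JOBS_URL.format(board_token=board_token)
    with urlopen(url, timeout=timeout) as response:
        return parse_greenhouse_jobs(json.load(response))
```

Keeping the parser separate from the network call means the same normalization can be tested offline and reused when other ATS sources are added.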

According to Backlinko's B2B intent data research, 74% of B2B purchase decisions are triggered by an internal business event. Hiring is one of those events — and it's publicly visible on job boards before any vendor relationship begins.

How to Design a Daily Job Board Monitoring Workflow

Scraping job boards once isn't a strategy — it's a dataset. The value is in monitoring: detecting new postings, deduplicating against your CRM, scoring by trigger strength, and routing to the right SDR.

Step 1: Define your ICP filter set

Start with firmographics — industry, company size, geography, revenue range. Then layer your trigger dictionary on top. You're not scraping all jobs; you're scraping companies that match your ICP and filtering for trigger titles.
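As a rough sketch of that two-layer filter, the firmographic check runs first and the trigger-title check runs second. The `IcpFilter` class, its field names, and the posting keys (`industry`, `country`, `employees`, `title`) are all assumptions for illustration. A revenue range could be added the same way as the employee range.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple


@dataclass(frozen=True)
class IcpFilter:
    industries: FrozenSet[str]
    countries: FrozenSet[str]
    employee_range: Tuple[int, int]     # inclusive (min, max)
    trigger_keywords: Tuple[str, ...]   # lowercase fragments of trigger titles

    def company_matches(self, posting: Dict) -> bool:
        """Firmographic layer: industry, geography, company size."""
        low, high = self.employee_range
        return (
            posting["industry"] in self.industries
            and posting["country"] in self.countries
            and low <= posting["employees"] <= high
        )

    def title_matches(self, posting: Dict) -> bool:
        """Trigger layer: does the title contain any trigger keyword?"""
        title = posting["title"].lower()
        return any(keyword in title for keyword in self.trigger_keywords)


def apply_filter(postings: List[Dict], icp: IcpFilter) -> List[Dict]:
    """Keep postings from ICP companies whose title hits a trigger keyword."""
    return [p for p in postings if icp.company_matches(p) and icp.title_matches(p)]
```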

Step 2: Schedule daily scrapes

Job postings age fast. A VP Sales role posted today may close in three weeks. Daily scraping catches postings within 24 hours of publication, giving you a window before competitors react. A weekly batch can leave a posting up to seven days old before anyone sees it, which erodes most of that first-mover window.

Step 3: Deduplicate against CRM and existing pipeline

New postings from accounts already in your CRM should route to the owning AE, not create a new SDR task. Deduplication by company domain is the standard approach. This is where most DIY implementations break down — deduplication logic across ATS platforms is non-trivial because the same company may post under multiple employer IDs across boards.
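A minimal sketch of domain-based deduplication, assuming each posting carries a `company_website` value and that the CRM can be exported as a mapping from domain to owning AE. Both are hypothetical shapes. Note that this version only strips `www.` and does not collapse other subdomains such as `careers.acme.com`.

```python
from typing import Dict, List, Tuple
from urllib.parse import urlparse


def normalize_domain(website: str) -> str:
    """Reduce a URL or bare hostname to a lowercase domain without 'www.'."""
    candidate = website.strip().lower()
    if "://" not in candidate:
        candidate = "//" + candidate  # lets urlparse treat the value as a host
    host = urlparse(candidate).hostname or ""
    return host[4:] if host.startswith("www.") else host


def route_postings(
    postings: List[Dict], crm_owners: Dict[str, str]
) -> Tuple[List[Dict], Dict[str, List[Dict]]]:
    """Split postings into AE follow-ups (known accounts) and new SDR tasks.

    New companies are grouped by domain so each one yields a single SDR task
    that still carries all of its concurrent postings.
    """
    ae_followups: List[Dict] = []
    sdr_tasks: Dict[str, List[Dict]] = {}
    for posting in postings:
        domain = normalize_domain(posting["company_website"])
        if domain in crm_owners:
            ae_followups.append({**posting, "owner": crm_owners[domain]})
        else:
            sdr_tasks.setdefault(domain, []).append(posting)
    return ae_followups, sdr_tasks
```

Grouping by normalized domain also sidesteps the multiple-employer-ID problem, as long as every source can be resolved to a company website.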

Step 4: Score by trigger strength

Not all triggers are equal. A company posting for a "VP Sales" and "Pricing Analyst" simultaneously is a stronger signal than one posting for an entry-level coordinator. Build a scoring model: senior titles score higher, recent postings score higher, companies with multiple concurrent trigger roles score highest.
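One way to turn those three rules into numbers is sketched below. The point values, age thresholds, and function names are arbitrary assumptions meant to show the structure, not recommended weights.

```python
from datetime import date
from typing import Dict, List

# Hypothetical seniority weights keyed on words found in the title.
SENIORITY_POINTS = {"vp": 3, "head": 3, "director": 3, "manager": 2, "analyst": 1}


def seniority_score(title: str) -> int:
    """Highest seniority weight among the words of the title (0 if none)."""
    words = title.lower().replace("/", " ").split()
    return max((SENIORITY_POINTS.get(word, 0) for word in words), default=0)


def recency_score(posted_on: date, today: date) -> int:
    """More points for fresher postings; thresholds are illustrative."""
    age_days = (today - posted_on).days
    if age_days <= 1:
        return 3
    if age_days <= 7:
        return 2
    if age_days <= 21:
        return 1
    return 0


def score_account(postings: List[Dict], today: date) -> int:
    """Score one company: its best trigger role plus a bonus per extra role."""
    if not postings:
        return 0
    best = max(
        seniority_score(p["title"]) + recency_score(p["posted_on"], today)
        for p in postings
    )
    concurrency_bonus = 2 * (len(postings) - 1)
    return best + concurrency_bonus
```

Under these weights, a company with a day-old "VP Sales" posting and a five-day-old "Pricing Analyst" posting scores 8, while a company with only a day-old coordinator role scores 3.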

Step 5: Route to CRM with context

The job posting itself is the context. The SDR's outreach message should reference the hiring signal without being explicit about it. "We noticed you're scaling your pricing function" is a natural opener if you've positioned yourself as a resource for that team. CRM enrichment should include the job title, posting date, source board, and a link to the original posting for reference.
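The enrichment fields listed above could be packaged as a small record before being sent to the CRM. This is a sketch only: the input keys and the `trigger_*` field names are hypothetical and would need to match your CRM's custom properties.

```python
from typing import Dict

REQUIRED_FIELDS = ("company_domain", "job_title", "posted_on", "source_board", "posting_url")


def build_crm_record(posting: Dict[str, str]) -> Dict[str, str]:
    """Build the enrichment payload for one trigger posting.

    Raises ValueError if any required context field is missing, so SDRs
    never receive a task without the posting link and date.
    """
    missing = [field for field in REQUIRED_FIELDS if not posting.get(field)]
    if missing:
        raise ValueError("posting is missing fields: " + ", ".join(missing))
    return {
        "company_domain": posting["company_domain"],
        "trigger_job_title": posting["job_title"],
        "trigger_posted_on": posting["posted_on"],      # ISO date string
        "trigger_source_board": posting["source_board"],
        "trigger_posting_url": posting["posting_url"],
    }
```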

E-commerce market data extraction follows a similar signal-to-action framework. The same principles — monitor, deduplicate, score, route — apply whether you're tracking product prices or hiring signals.

The Engineering Cost of DIY Job Board Scraping

This is the part that most "scrape job boards" guides skip. Building a first job board scraper is approachable. Maintaining one at scale is an engineering project.

Here's what you're actually building:

- Parsers for each source (LinkedIn, Indeed, Greenhouse, Lever — all different schemas)

- Proxy rotation to avoid IP blocks (LinkedIn bans IP ranges aggressively)

- Session management for JavaScript-rendered pages

- Schema normalization across sources (same company, different data formats)

- Deduplication logic across boards

- Change detection (new posting vs. updated posting)

- Alert and delivery to Slack, webhook, or CRM API

- Monitoring and retry logic for failed scrapes

- Ongoing maintenance as job boards update their anti-bot measures
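To give a feel for one item on that list, change detection can be sketched with a content fingerprint per posting. The posting keys (`source`, `external_id`, `title`, `location`, `description`) and the in-memory `seen` store are assumptions. A production version would persist fingerprints in a database.

```python
import hashlib
from typing import Dict


def posting_key(posting: Dict[str, str]) -> str:
    """Stable identity for a posting: source board plus the board's own ID."""
    return f'{posting["source"]}:{posting["external_id"]}'


def content_fingerprint(posting: Dict[str, str]) -> str:
    """Hash the fields whose change should count as an update."""
    material = "\n".join([
        posting["title"].strip().lower(),
        posting["location"].strip().lower(),
        posting["description"].strip(),
    ])
    return hashlib.sha256(material.encode("utf-8")).hexdigest()


def classify(posting: Dict[str, str], seen: Dict[str, str]) -> str:
    """Return 'new', 'updated', or 'unchanged' and record the latest fingerprint."""
    key = posting_key(posting)
    fingerprint = content_fingerprint(posting)
    previous = seen.get(key)
    seen[key] = fingerprint
    if previous is None:
        return "new"
    return "unchanged" if previous == fingerprint else "updated"
```

Only postings classified as "new" would normally create SDR tasks. "Updated" postings can refresh the existing CRM record instead.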

According to Semrush's enterprise data research, teams that underestimate scraping infrastructure costs typically spend 3–6x more than planned on maintenance once the initial build is complete. LinkedIn alone ships anti-bot updates multiple times per year, breaking parsers without warning.

For sales teams, this is a classic build-vs-buy question. If scraping infrastructure isn't your core product, the engineering overhead of owning it compounds over time.

How ScrapeWise Delivers Job Board Data as a Managed Feed

Rather than building and maintaining scrapers across six sources, ScrapeWise handles the infrastructure layer — proxy rotation, parser maintenance, anti-bot handling, schema normalization — and delivers a clean, deduplicated feed of job postings matched to your ICP filter.

You configure the filter: target industries, company size range, geographies, trigger job titles, minimum seniority level. ScrapeWise runs the daily scrapes, normalizes output across sources, and delivers it to your CRM or data warehouse via webhook or API.

The result is a sales intelligence layer that your SDR team actually uses, rather than a data project that stalls in engineering backlogs.

If you're already using product data extraction for price monitoring, job board monitoring runs on the same managed infrastructure — no new tooling required.

Turning Job Posting Data Into Outreach That Converts

Raw data doesn't close deals. The job posting is the trigger; the outreach is the conversion mechanism.

Lead with the business problem, not the hire. "Saw you're scaling your pricing team — most pricing managers we talk to inherit a manual process that can't keep up with SKU growth. Happy to share how a similar retailer solved that in 30 days." That's a conversation starter. "Congratulations on the new hire" is noise.

Time the outreach to the posting. A posting that went live yesterday warrants same-day outreach if your SDR sees it. A three-week-old posting is still worth a touch, but the window for "first call" positioning has narrowed. Use posting date as a sequencing input in your CRM.

Stack signals. A company that's hiring a Pricing Manager, shows up in your target account list, and is in a growth-stage European market is a much stronger prospect than a single-signal trigger. Build your scoring model to surface stacked-signal accounts at the top of the queue.

Personalize to the function, not the company. You often don't know who reviewed the budget or who will evaluate your tool — but you know the hiring manager's function. Write outreach that resonates with a Pricing Manager's pain points: data freshness, SKU volume, cross-market coverage. Let the job posting tell you what they care about.

Building a Sustainable Job Board Intelligence System

The SDR teams that get the most value from job board scraping treat it as a system, not a one-time list pull.

Run regular trigger dictionary reviews. As your ICP evolves, the job titles that signal fit evolve too. Review monthly which titles produced pipeline versus noise and update your filter set accordingly.

Build closed-loop feedback. When a deal closes, trace it back to the trigger. Was it a job posting? Which board? Which title? This data tells you which signals to prioritize and which to deprioritize in your scoring model.
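A closed-loop report can be as simple as a win rate per trigger title. The sketch below assumes deals are exported as dicts with a `trigger_title` and a `stage` field, and that won deals carry the stage value `closed_won`. All three names are hypothetical. The same grouping works for source board.

```python
from collections import Counter
from typing import Dict, List


def win_rate_by_title(deals: List[Dict[str, str]]) -> Dict[str, float]:
    """Map each trigger title to closed-won deals divided by all deals it sourced."""
    totals: Counter = Counter()
    wins: Counter = Counter()
    for deal in deals:
        title = deal["trigger_title"]
        totals[title] += 1
        if deal["stage"] == "closed_won":
            wins[title] += 1
    return {title: wins[title] / totals[title] for title in totals}
```

Titles with a consistently low win rate are candidates for a lower score or removal from the trigger dictionary.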

Expand geographically by adding filters, not rebuilding. Once the workflow is running for your core market, expanding to Germany or the Netherlands is a filter change — not an infrastructure rebuild. ATS platforms like Personio (dominant in DACH markets) can be added to coverage with minimal lift on a managed infrastructure.

Expand ATS coverage over time. Greenhouse, Lever, and Ashby together cover the majority of Series B+ companies across Europe and the US. Adding Workday and SAP SuccessFactors significantly expands enterprise coverage without rewriting your core scraping logic.

The companies winning at outbound in 2026 aren't sending more emails — they're sending better-timed ones. Scraping job boards for B2B sales intelligence gives SDRs one of the clearest, most actionable signals available. The workflow above is where to start.
