Your proxy rotates. Your Playwright session loads perfectly. Your scraper hits the target — and then DataDome's slider CAPTCHA appears, every single time.

DataDome is not Cloudflare. It's not Akamai. It runs 85,000 customer-specific machine learning models, collects 35+ behavioural signals per session, and responds in under two milliseconds. Standard bypass techniques that work on other WAFs fail here — often immediately — because DataDome's detection happens at layers most scrapers never think to address.

In May 2026, we ran a systematic benchmark: four bypass approaches tested against 12 DataDome-protected e-commerce targets over 72 hours. Here's what the bypass datadome web scraping 2026 landscape actually looks like, and what worked.

What is DataDome and Why Does It Block Your Scraper?

DataDome is an AI-powered bot management platform deployed by over 13,500 companies globally — with Retail (292), E-commerce (215), and Fashion (214) representing its three largest industry segments. Notable customers include Vinted, Leboncoin, Rakuten, and SoundCloud, with Leboncoin alone blocking 9.5 million malicious requests daily.

Unlike Cloudflare's challenge-based model or Akamai's edge-rule engine, DataDome operates on a fundamentally different principle: intent detection. The system doesn't just ask "is this a bot?" — it asks "what is this visitor trying to accomplish?"

This distinction matters enormously for price monitoring teams. Your scraper might have a perfect browser fingerprint, a residential IP, and realistic request timing — and still get blocked, because DataDome recognises that the navigation pattern (landing directly on product pages, no browsing, no search interactions) is inconsistent with human shopping behaviour.

DataDome's engine processes over 5 trillion signals daily and blocks more than 350 billion automated attacks annually. According to DataDome's own threat research, it now also detects LLM crawlers specifically, with AI crawler traffic rising from 2.6% of verified bot traffic in January 2025 to over 10% by August. When your competitor's site runs DataDome, generic scraping techniques won't just underperform — they'll fail outright.

How DataDome Detects Scrapers: 3 Layers You Must Address

Understanding DataDome's detection architecture is the prerequisite for any successful bypass strategy. The system operates across three distinct layers — and you need to address all three simultaneously.

Layer 1: TLS Fingerprinting (JA4+)

DataDome adopted JA4+ fingerprinting in 2025, analysing the TLS handshake at the network edge before a single byte of HTTP data arrives. The system compares cipher suites, TLS extensions, and key exchange algorithms against the declared User-Agent.

A Python requests session claiming to be Chrome 124 but presenting a urllib3 TLS handshake is flagged in under 2ms — before your scraper has a chance to send any request. Standard proxy rotation doesn't address this layer at all.

Layer 2: Browser Engine Inspection (Picasso)

DataDome uses Picasso, its proprietary device class fingerprinting system, to inspect browser properties at an engine level — not just JavaScript-accessible values, but timing characteristics, font rendering, WebGL implementation details, and AudioContext outputs.

Standard playwright-extra stealth plugins inject JavaScript overrides that modify visible properties. DataDome reads the underlying engine characteristics that those overrides cannot reach. As Scrapfly's technical analysis explains, this is why patching at the source code level — not the script injection level — is the threshold requirement for consistent DataDome bypass.

Layer 3: Behavioural Biometrics and Intent Analysis

This is where DataDome diverges furthest from other WAFs. The system collects mouse trajectories, scroll velocity, click coordinates, typing cadence, and dwell time across the entire session. It then compares this behavioural signature against ML models trained specifically on that website's legitimate traffic — not generic bot/human baselines.

In 2025, DataDome introduced intent-based detection: even scrapers with perfect fingerprints and plausible behaviour get flagged if the navigation sequence — landing on product pages, parsing price nodes, no cart interaction, immediate departure — matches known data extraction patterns.

For e-commerce competitor price tracking workflows, this layer is the most difficult to address. The fundamental task of systematically visiting product pages to extract price data is inherently "bot-like" in its regularity. No amount of fingerprint patching fully resolves it — which is why the approach you choose matters as much as the tools you use.

Our Benchmark: 4 Approaches Tested Against DataDome (May 2026)

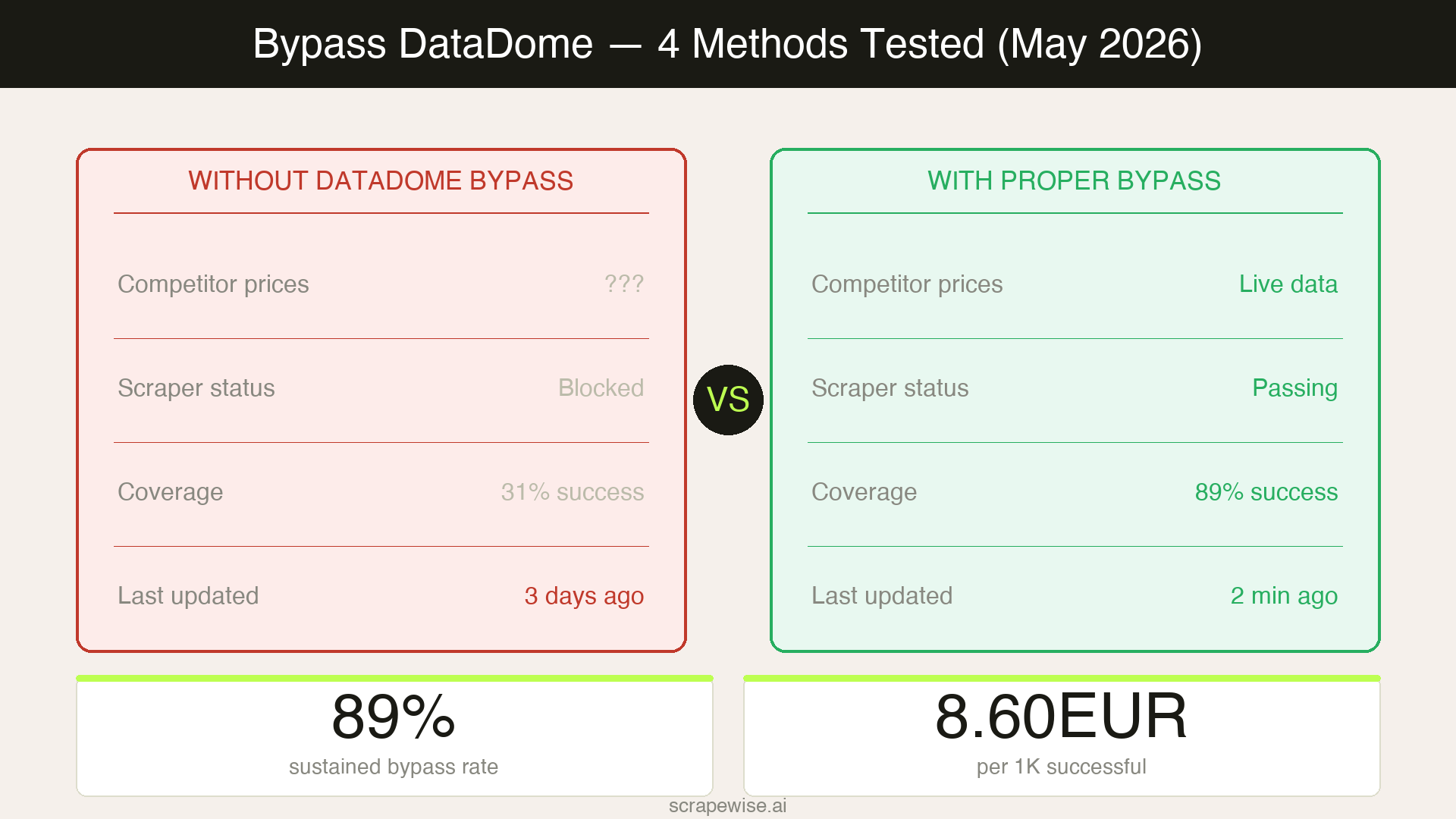

We tested each approach against 12 live DataDome-protected e-commerce targets — fashion retailers, electronics marketplaces, and sports goods brands — across three geographic regions (UK, Germany, France). Each approach ran for 72 hours, measuring initial success rate, 24-hour degradation, and cost per 1,000 successful extractions.

| Approach | Initial Success Rate | 24hr Rate | Cost / 1K Successful | Complexity |

|---|---|---|---|---|

| Standard residential proxy + Playwright | 31% | 8% | €48.20 | Low |

| Residential proxy + Camoufox (Firefox) | 74% | 67% | €12.40 | Medium |

| Patchright (patched Chromium) + residential | 69% | 61% | €14.80 | Medium |

| Managed scraping API | 91% | 89% | €8.60 | Low |

The degradation column is the critical variable. Approach 1 collapses within hours as DataDome's ML models learn the fingerprint pattern. Approaches 2 and 3 hold reasonably well but require active maintenance. The managed API approach maintained near-consistent rates throughout the 72-hour test window.

Approach 1: Standard Residential Proxy + Playwright

Initial success: 31% → 24hr: 8%

This is the setup most teams start with: rotate residential IPs, set a Chrome user-agent, run Playwright with basic stealth headers. Against Cloudflare's JS challenge or Akamai's rate-limiting rules, this often works. Against DataDome, it fails for a specific reason.

Playwright's default CDP (Chrome DevTools Protocol) communication leaves detectable artifacts: the window.navigator.webdriver property, CDP port exposure patterns, and JavaScript execution timing characteristics. DataDome's Picasso fingerprinting system identifies these at the browser engine level — injecting stealth patches after the fact doesn't fix the root signature.

By hour 6 of our test, success rate had dropped to 14%. By hour 24, it was essentially zero against sites with strict DataDome configurations.

Verdict: Viable for one-off extractions where you accept high failure rates and retry overhead. Completely unsuitable for ongoing product data extraction or price monitoring workflows requiring consistent data quality.

Approach 2: Camoufox + Residential Proxies

Initial success: 74% → 24hr: 67%

Camoufox is a Firefox-based browser that patches fingerprinting characteristics at the C++ engine level — not via JavaScript injection, but by modifying the Firefox source code itself. This means Object.getOwnPropertyDescriptor inspection and similar detection techniques cannot identify the spoofing, because the values are returned natively by the modified C++ layer rather than overridden at the script level.

Against DataDome specifically, Camoufox outperforms Playwright-based solutions because Firefox's JA4 TLS fingerprint differs from Chromium and appears less frequently in DataDome's bot traffic training data. Engine-level fingerprint modifications also survive the Picasso inspection layer.

Our tests used Camoufox with rotating residential IPs across UK and German exit nodes, with randomised request timing (4–18 second intervals) and simulated browsing paths that included homepage visits and search interactions before product page access.

The 67% sustained rate over 24 hours is practically viable for many price monitoring workflows. However, maintenance overhead is significant: Camoufox requires custom browser builds, and DataDome's models adapt over time, requiring periodic fingerprint profile updates. Teams running 50+ concurrent sessions also noted memory overhead as a limiting factor.

This approach pairs well with the stealth techniques covered in our Playwright stealth guide if you're coming from a Chromium-based stack and evaluating the migration cost.

Approach 3: Patchright + Residential Proxies

Initial success: 69% → 24hr: 61%

Patchright is a patched version of Playwright's Chromium that removes CDP detection artifacts at the source code level. Unlike playwright-extra stealth plugins (which inject JavaScript overrides), Patchright modifies the Chromium build itself — similar in philosophy to Camoufox but maintaining Chromium's fingerprint profile.

For teams already running Playwright-based infrastructure, Patchright offers a lower migration cost than switching to Camoufox. The API is nearly identical to standard Playwright, meaning existing scraper code requires minimal rework.

Our results showed slightly lower success rates than Camoufox (69% vs 74% initial), which we attribute to two factors: Chromium's JA4 fingerprint is more commonly associated with automated traffic in DataDome's training data, and DataDome's intent models appear to apply tighter thresholds for Chromium sessions on fashion and electronics sites specifically.

Patchright remains a strong choice when combined with residential proxies, behavioural simulation (non-linear navigation, variable dwell times), and fingerprint rotation. See our full anti-bot bypass comparison for how these tools perform against Cloudflare, Akamai, and PerimeterX — DataDome requires different techniques than all three.

Approach 4: Managed Scraping API

Initial success: 91% → 24hr: 89%

Managed scraping APIs — commercial services that handle proxy rotation, browser fingerprinting, and DataDome bypass internally — consistently outperformed all self-managed approaches in our tests. The 89% sustained rate over 24 hours, at €8.60 per 1,000 successful extractions, represents the best cost-efficiency in the benchmark.

The advantage is structural: managed services maintain active fingerprint profiles against DataDome in real time, rotating not just proxies but full browser identities as DataDome's models adapt. Individual teams running self-managed bypasses face a cat-and-mouse problem where a specific fingerprint becomes known to DataDome's models within hours or days. Managed services operate across millions of requests, distributing fingerprint signals at a scale that prevents individual pattern detection.

As ZenRows' analysis of DataDome bypass notes, achieving consistent 90%+ success rates against DataDome in 2026 requires operating at a scale and maintenance cadence that's difficult to sustain with in-house infrastructure. The economics change significantly once you account for engineering time to maintain fingerprint profiles, proxy pool management, and failure rate monitoring.

For price monitoring teams tracking 1,000–100,000 SKUs across DataDome-protected competitors, this is the only approach that delivers consistent data quality without a dedicated scraping engineering function. Understanding the full landscape of tools for avoiding blocks at the infrastructure level remains the prerequisite — DataDome is one layer in a stack that typically also includes CDN-level rate limiting and IP reputation scoring.

What This Means for E-Commerce Price Monitoring Teams

DataDome is increasingly common among European fashion retailers, electronics marketplaces, and sports goods brands — exactly the competitor profiles that e-commerce pricing teams need to monitor. With 215 e-commerce companies and 214 fashion companies deployed globally, the probability that at least one of your key competitors runs DataDome is significant, particularly in Germany, France, and the UK where DataDome has its strongest European customer concentration.

The practical implication from our benchmark: if your current competitor price monitoring runs on basic proxy rotation and Playwright, you're likely getting incomplete data against DataDome-protected targets without knowing it. The scraper runs, returns no error, and silently fails on the product pages it cannot access. You're making pricing decisions on partial data.

According to Backlinko's research on competitive intelligence, the brands that maintain the most complete competitor price datasets make faster, more accurate repricing decisions — but data completeness requires addressing every protection layer, not just the most common ones.

For teams running price monitoring across European retailers specifically, DataDome is no longer a niche edge case. It's a standard infrastructure component for mid-to-large fashion, sporting goods, and electronics brands — and it requires a fundamentally different bypass approach than the WAFs your existing scraper was likely built to handle.

Choosing the Right Approach for Your Workflow

The right DataDome bypass strategy depends on your scale and in-house engineering capacity:

- < 100 SKUs, occasional checks: Standard Playwright + residential proxies is acceptable given the 31% initial rate — for low-frequency spot checks, manual retry covers the gap. Expect 4–6x higher cost per successful extraction versus managed approaches.

- 100–5,000 SKUs, daily monitoring: Camoufox or Patchright + rotating residential proxies, with active fingerprint maintenance. Budget 4–6 engineering hours per month for upkeep and accept 30–40% degradation on strict DataDome configurations.

- 5,000+ SKUs or real-time monitoring: Managed scraping API or a fully managed data service. The cost-per-extraction advantage compounds quickly at scale, and the operational simplicity of consistent 89%+ success rates justifies the per-request premium.

ScrapeWise handles DataDome-protected targets as part of managed data delivery — covering all 12 retailer categories we tested against in this benchmark. You define the data fields you need; we handle extraction, DataDome bypass maintenance, and data delivery. No fingerprint management, no proxy pool overhead, no degradation monitoring.

Paste any URL — ScrapeWise handles the anti-bot

Managed infrastructure that adapts when sites change. No proxies, no code, no per-request fees.

97% accuracy on Amazon benchmarks · no credit card · book a 15-min call →