Your scraper worked perfectly last Tuesday. Today it's throwing errors, your pricing data is 48 hours stale, and your developer is burning a Friday night diagnosing a DOM change on a competitor's product page. Sound familiar? Scraper maintenance has quietly become one of the most expensive line items in data operations — and most teams don't even track it. This post breaks down exactly what that cost looks like, and why self-healing scraper infrastructure is the fix that changes the economics entirely.

What Is Scraper Maintenance — and Why Does It Keep Breaking?

Traditional scrapers work by targeting specific HTML elements on a page — a CSS class name, an XPath selector, a div ID. When the website owner updates their frontend (which modern e-commerce sites do constantly), those selectors break. The scraper stops delivering data. Someone has to fix it manually.

This is what engineers call "selector rot" — and it's endemic. According to ScrapeOps' 2025 Web Scraping Market Report, in some industries 10–15% of scrapers require weekly fixes due to DOM shifts, fingerprinting changes, or endpoint throttling. That's not an edge case. That's a weekly tax on your data team.

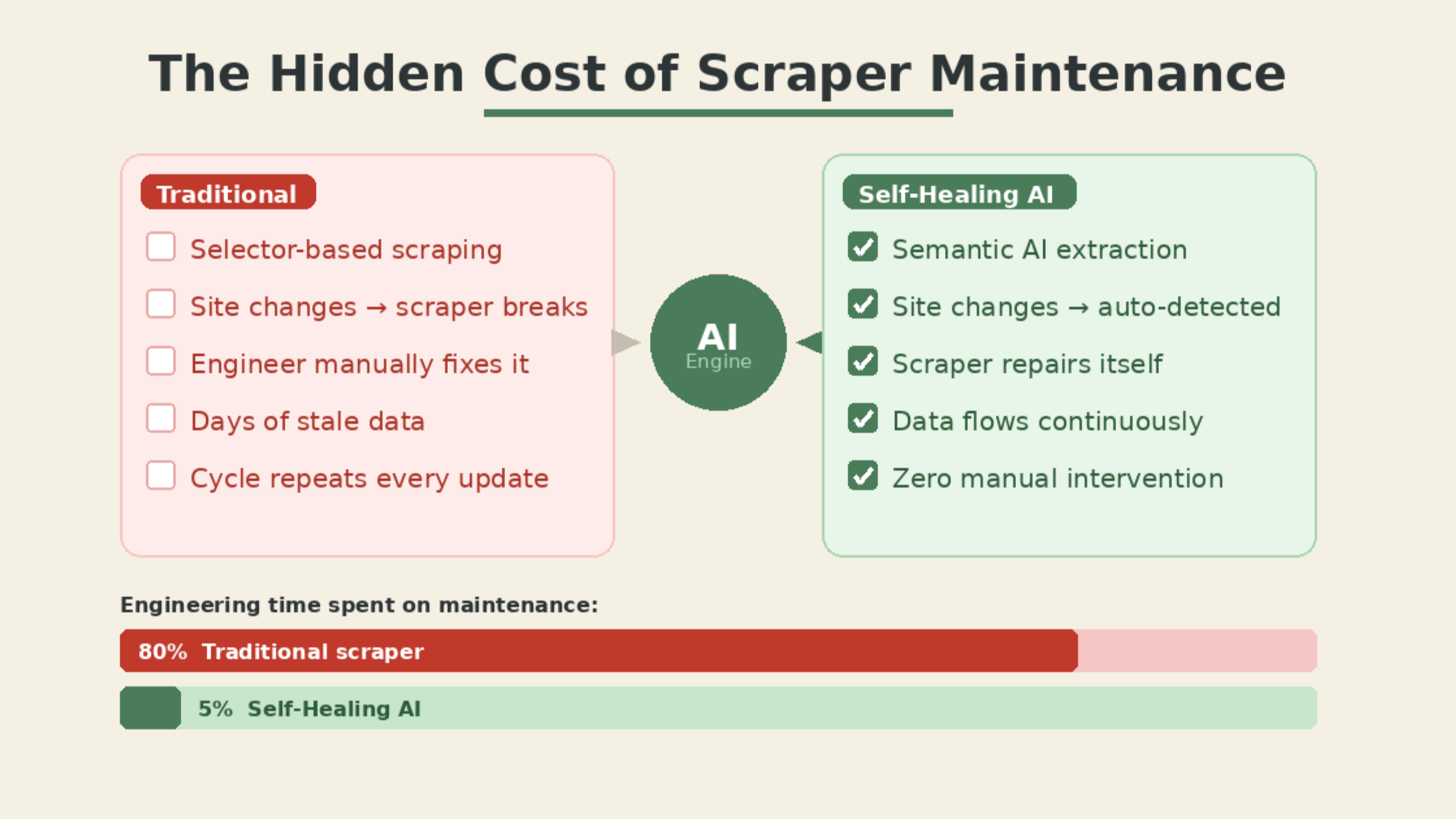

The maintenance cycle looks like this:

- A site redesigns a product page template

- Your selector targets the old class name, returns null

- Your downstream pricing dashboard fills with blanks or crashes

- An engineer gets alerted, investigates, rewrites the selector

- Two to three days of data are lost or corrupted

- Repeat in six weeks when the site updates again

For businesses monitoring dozens or hundreds of competitor sites, this cycle becomes a full-time job — before you ever touch the actual data.

The Real Cost Nobody Measures

The cost of scraper maintenance is largely invisible because it hides inside engineering time, not infrastructure bills. But when you add it up, the numbers are significant.

Kadoa's 2026 analysis of enterprise scraping teams found that teams operating traditional scrapers spend roughly 80% of their time on maintenance and only 20% actually building new capabilities or using the data. With AI-powered self-healing approaches, that ratio inverts — teams spend around 5% on setup and 95% on using what they extract.

Think about what that means in practice. A mid-size e-commerce company with one data engineer earning €60K/year is effectively spending €48K of that salary on fixing broken selectors. Not on analysis. Not on pricing strategy. On maintenance.

Additional hidden costs include:

- Stale data risk: Pricing decisions made on 48-hour-old data when competitors are repricing daily

- Monitoring overhead: Engineering time building alerting systems just to know when scrapers break

- Opportunity cost: Competitive intelligence gaps during outage windows

- Infrastructure sprawl: Increasing proxy and compute spend as scrapers grow more complex to compensate for brittleness

For businesses relying on automated price monitoring as a core competitive tool, these outages aren't just inconvenient — they translate directly into margin losses.

How Self-Healing Scraper Infrastructure Works

Self-healing scrapers solve the root cause, not the symptom. Instead of targeting rigid HTML selectors that break when pages change, they use AI — specifically vision models and large language models — to understand pages semantically.

Rather than looking for div.product-price.main, a vision-based scraper looks at the rendered page the way a human does and identifies "this is where the price appears" based on visual and semantic context. When the site moves that price field from the sidebar to the center column, the scraper still finds it. It doesn't need to be told where to look — it understands what it's looking for.

The practical architecture looks like this:

- Initial extraction: AI generates deterministic scraping code based on page structure and intent

- Monitoring layer: Agents continuously test extraction outputs against expected patterns

- Auto-repair: When anomalies are detected (null values, schema drift, layout changes), the AI regenerates the extraction logic automatically

- Zero human intervention: The data pipeline keeps running through site changes

McGill University researchers tested this across 3,000 pages on Amazon, Cars.com, and Upwork. AI-based extraction maintained 98.4% accuracy even as page structures changed — compared to traditional selector-based scrapers which degraded significantly after any frontend update.

Platforms like ScrapeWise.ai are built on this architecture, offering zero-maintenance competitive intelligence pipelines specifically for e-commerce teams that can't afford the downtime of traditional scrapers.

Why E-Commerce Teams Feel This Pain Most

E-commerce sits at the intersection of every factor that makes scraper maintenance expensive. Competitors update product pages frequently. Pricing changes daily. Anti-bot defenses evolve constantly. And the business impact of stale data is immediate and measurable — unlike in industries where intelligence cycles are slower.

According to Ahrefs' analysis of competitive intelligence workflows, businesses that maintain continuous, accurate competitor data make pricing adjustments 3–4x more frequently than those relying on manual or intermittent collection. That cadence only works if your data pipeline is reliable.

The specific maintenance triggers in e-commerce are predictable:

- Seasonal site refreshes: Retailers redesign pages for major sale periods, breaking scrapers ahead of the periods when data is most valuable

- Platform migrations: Shopify-to-custom or WooCommerce migrations often change the entire DOM structure overnight

- A/B testing: Modern e-commerce sites run constant frontend tests that can change element placement and class names without any coordinated announcement

Each of these is a maintenance event under the traditional model. Under a self-healing architecture, they become non-events. The e-commerce market data extraction pipeline keeps running.

The Build vs. Buy Calculation Has Changed

Three years ago, building self-healing scraper infrastructure in-house was a reasonable consideration for well-resourced engineering teams. The tooling was immature and the economics could work for large enough data programs.

In 2026, that calculation has shifted. The cost of managed self-healing infrastructure has dropped significantly, while the complexity of building it from scratch has increased. Anti-bot systems from Cloudflare, Akamai, and DataDome now deploy TLS fingerprinting and behavioral analysis that require specialized knowledge to bypass legally and reliably. Proxy management at scale is its own engineering discipline. Building and maintaining all of this alongside the AI layer that powers self-healing is a multi-year engineering project.

For most e-commerce teams, the right question is no longer "can we build this?" but "is building this our competitive advantage?"

If your business competes on pricing intelligence, assortment strategy, or market responsiveness — not on scraping infrastructure — then the build path is a distraction. Turning websites into reliable data APIs is infrastructure, not strategy. The strategy is what you do with the data.

Backlinko's analysis of operational efficiency in SaaS businesses consistently shows that teams which eliminate infrastructure maintenance from their core workflows allocate significantly more time to growth activities. The same principle applies to data teams.

Moving From Reactive to Predictive Operations

The shift from traditional to self-healing scraper infrastructure isn't just a maintenance fix — it changes the operational posture of your entire data program.

Traditional scraper operations are reactive. You find out something is broken when the data stops arriving or when a dashboard shows gaps. You fix it. You wait for the next break.

Self-healing infrastructure enables predictive operations. Monitoring runs continuously. Anomalies surface before they cascade into pipeline failures. The system documents what changed, when, and how it adapted — giving you a change log of competitor site updates as a side effect of simply keeping your scraper running.

That change log is itself valuable intelligence. When a competitor migrates their entire product catalog structure, that's a signal. When pricing elements start loading via a new JavaScript framework, that suggests a platform change. The metadata of maintenance becomes market intelligence.

For real-time analytics across retail and wholesale, this kind of continuous, self-maintaining pipeline is the foundation that makes everything else possible.

Conclusion

Scraper maintenance isn't a technical inconvenience — it's a recurring tax on your data team's time and your business's decision-making speed. The industry has moved. Self-healing, AI-native infrastructure isn't a premium feature anymore; it's becoming the baseline expectation for teams that need reliable competitive intelligence.

The question isn't whether your current scrapers will break again. They will. The question is whether you'll keep paying engineers to fix them, or whether you'll build a data program that runs without that tax.

If you're evaluating what zero-maintenance competitive intelligence looks like in practice, ScrapeWise.ai is worth exploring — built specifically for e-commerce teams that need pricing and product data that stays current through every site change.

Paste any URL — ScrapeWise handles the anti-bot

Managed infrastructure that adapts when sites change. No proxies, no code, no per-request fees.

97% accuracy on Amazon benchmarks · no credit card · book a 15-min call →